LoginVSI Pre-Flight Checks

In the previous blog post, I discussed how LoginVSI can help benchmark your VDI or SBC environment and indicate where performance bottlenecks are likely to occur when the solution is heavily loaded. As discussed previously, you should by now have the following components set up and configured:-

- LoginVSI share (hosted on Windows Server or a Samba share, so that the Windows 7 limit of 20 concurrent connections does not apply)

- LoginVSI Launcher workstations (with the Launcher setup run in advance)

- LoginVSI Target desktop pools (with the Target setup run in advance and Microsoft Office installed)

- Active Directory script run to create the required LoginVSI users and groups and apply the Group Policy settings to those users (which, amongst other things, turns off UAC)

- Statistics logging confirmed as working properly on vCenter (assuming a vSphere infrastructure)

Once the environment has been configured and your pool of desktops has been spun up, it is recommended that all virtual desktops be left to “sit” idle for a while so that they reach “steady state” before the tests commence. Steady state is essentially the point at which all desktops have started, launched all startup services (anti-virus scanners, “call home” services or applications, Windows services) and disk activity has settled down to an idle tick, rather than thrashing as it does at boot. It’s worth bearing in mind that if all virtual desktops are on the same datastore, it may take several minutes for steady state to be reached, depending on disk latencies. In my particular tests, I had between 100 and 120 desktops spun up at once, and I left the pool to sit for around 20 minutes before running any LoginVSI workloads.

How do you know if steady state has been reached? I used the vSphere client to look at CPU and memory usage of each virtual machine and waited until the utilisation dropped down to a minimum. After a few test runs, you will start to get an idea of where steady state is, as each desktop build is slightly different, depending on applications and services installed. It’s not imperative you do this, but if you read the white papers produced by the major VDI stack vendors (Microsoft, Citrix, VMware, NetApp etc.), you will find this is something they tend to do.
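If you’d rather not eyeball the charts every time, the same judgement can be approximated in a few lines. The sketch below is a hypothetical helper, not part of LoginVSI or vCenter: it assumes you have a list of CPU utilisation samples (in percent) for a desktop or host, and it treats steady state as the first point where the rolling average of the last `window` samples falls to or below `threshold_pct`. Both parameters are assumptions you would tune after a few test runs.

```python
from statistics import mean


def first_steady_sample(samples, window=10, threshold_pct=10.0):
    """Return the index of the first sample at which the rolling mean of the
    previous `window` samples is at or below `threshold_pct`, or None if the
    series never settles. `window` and `threshold_pct` are hypothetical
    tuning values, not LoginVSI or vCenter settings."""
    if window < 1:
        raise ValueError("window must be at least 1")
    for end in range(window, len(samples) + 1):
        # Average of the `window` samples ending at index end - 1.
        if mean(samples[end - window:end]) <= threshold_pct:
            return end - 1
    return None


# Hypothetical CPU % samples from a desktop settling down after boot.
boot_cpu = [90, 85, 70, 50, 30, 12, 8, 7, 6, 7, 8, 6]
print(first_steady_sample(boot_cpu, window=3))  # prints 7
```

A rolling average is used rather than a single reading so that one quiet sample in the middle of start-up activity isn’t mistaken for steady state.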

At this stage, it’s often prudent to perform a few test runs, just to ensure that everything is running as you expect. You can also use these test runs for some workload tuning, such as the time delay between sessions starting. As discussed in the previous post, if you set this value too aggressively, you can saturate your hypervisor host very quickly, which can negatively skew the results. Plus, is this the reality of how your users will use your VDI environment? Is it likely that 100 users will log in near-simultaneously within a three- or four-minute window? In most cases you’d probably say no. The obvious exception would be an educational environment, in which dozens of users (even hundreds in a university or college setting) log in at the same time and start several applications straight after login. In a commercial or non-academic environment, users generally log in over a much longer time frame, and even once they’re logged in, they are far more inclined to make long phone calls or make a coffee, resulting in significant periods of idle time.

As a tip, use the calculator built into the Management Console to work out the delay between session launches for your chosen number of sessions, and make sure the figures represent “real life” numbers, such as a login every 6 minutes.
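The arithmetic behind that calculator is simple enough to sanity-check by hand. The following sketch is my own approximation, not the Management Console’s actual formula: it assumes logons are spread evenly, one per interval, across the window you choose, and the function names are hypothetical.

```python
def launch_interval_seconds(sessions: int, window_minutes: float) -> float:
    """Seconds between session launches so that `sessions` logons are spread
    evenly (one per interval) across `window_minutes`."""
    if sessions < 1 or window_minutes <= 0:
        raise ValueError("need at least one session and a positive window")
    return window_minutes * 60 / sessions


def logon_window_minutes(sessions: int, interval_seconds: float) -> float:
    """Inverse calculation: how long it takes to launch every session."""
    return sessions * interval_seconds / 60


# 100 users crammed into a four-minute window: a logon every 2.4 seconds.
print(launch_interval_seconds(100, 4))
# A logon every 6 minutes for 100 users stretches the ramp-up to 600 minutes.
print(logon_window_minutes(100, 6 * 60))
```

Running the inverse calculation is a useful reality check: a gentler, more realistic ramp-up also makes each test run considerably longer.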

During my testing with a customer, we would make a single environmental change and then analyse the results: for example, changing the amount of memory given to the virtual desktops (1.5GB vs 2GB), adding an extra vCPU, or changing the underlying storage fabric. In this respect, LoginVSI can also be used to model environmental changes, as a “what if” type of analysis. This can be especially useful if you are conducting a performance analysis of new storage to validate a vendor’s claims, or testing a “what if we add 20 more virtual desktops to this host” scenario.
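Keeping to one change per run is essentially a one-factor-at-a-time test plan. As a sketch, assuming you describe each test configuration as a simple dictionary (the keys and values below are hypothetical, not LoginVSI settings), the variants can be generated from a baseline so that each differs from it in exactly one setting:

```python
from typing import Any


def one_factor_variants(baseline: dict[str, Any],
                        changes: dict[str, list[Any]]) -> list[dict[str, Any]]:
    """Return configurations that each differ from `baseline` in exactly one key."""
    variants = []
    for key, values in changes.items():
        if key not in baseline:
            raise KeyError(f"unknown setting: {key}")
        for value in values:
            if value == baseline[key]:
                continue  # identical to the baseline, nothing to test
            variant = dict(baseline)  # shallow copy, then change one setting
            variant[key] = value
            variants.append(variant)
    return variants


# Hypothetical baseline and candidate changes, echoing the examples above.
baseline = {"memory_gb": 1.5, "vcpus": 1, "storage": "local-raid1"}
changes = {"memory_gb": [2.0], "vcpus": [2], "storage": ["fc-san"]}
for run in one_factor_variants(baseline, changes):
    print(run)
```

Because every variant is compared against the same baseline, any shift in VSIMax can be attributed to the single setting that changed.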

VSIMax

The end goal is the VSIMax value, which is essentially the “tipping point” of performance. The way it is established is something I still don’t fully understand (and I read the explanation several times!), but in essence it is calculated by capturing the delays measured when performing tasks in the target workload. Timers embedded within the workloads spawn activities such as reading Outlook messages or playing a Flash video, and the intervals between activities are randomised so as to imitate real-life usage. A baseline average response time is calculated, and when response times rise significantly above that baseline, the VSIMax value is reached. This value essentially represents the maximum number of virtual desktops per host before performance significantly degrades.
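To make the idea concrete, here is a deliberately simplified illustration. It is not LoginVSI’s actual VSIMax algorithm, which is more involved and has changed between product versions. It assumes a list where `response_ms[i]` is the average response time measured with `i + 1` active sessions, takes the mean of the first few entries as the baseline, and reports the last session count before a rolling average exceeds the baseline plus a fixed margin. The 1000 ms margin and the window sizes are illustrative assumptions.

```python
from statistics import mean


def tipping_point(response_ms, baseline_sessions=5, margin_ms=1000.0, smooth=3):
    """Simplified VSIMax-style estimate (not LoginVSI's real algorithm).

    response_ms[i] is the average response time with i + 1 active sessions.
    Returns the highest session count reached before the rolling average of
    `smooth` readings exceeds baseline + margin_ms, or None if it never does.
    """
    if len(response_ms) < max(baseline_sessions, smooth):
        raise ValueError("not enough measurements")
    baseline = mean(response_ms[:baseline_sessions])
    threshold = baseline + margin_ms
    for end in range(smooth, len(response_ms) + 1):
        # `end` is the session count at the right-hand edge of the window.
        if mean(response_ms[end - smooth:end]) > threshold:
            return end - 1
    return None


# Hypothetical response times that climb as sessions are added.
readings = [800] * 5 + [900, 1200, 1500, 1900, 2200, 2600]
print(tipping_point(readings))  # prints 9
```

Smoothing over a few readings stops a single slow task from being reported as the tipping point.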

In our particular test case, we were looking to achieve a density of 100 desktops per vSphere blade. This figure came out of a capacity planning exercise, in which VMware’s Capacity Planner was deployed to a number of workstations in a “knowledge worker” use case: users who generally have medium to high task demands, such as sending messages in Outlook, opening large spreadsheets and manipulating graphics-intensive slide decks. Based on the Capacity Planner results and the specification of the hypervisor hardware, 100 desktops was considered an appropriate density.
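The density figure itself is essentially a bottleneck calculation: the host can only run as many desktops as its scarcest resource allows. The sketch below assumes you have per-desktop demand figures of the kind a capacity planning exercise produces. All of the numbers, the resource names and the 80% headroom factor are hypothetical and are not the figures from this project.

```python
def max_desktops(host: dict[str, float], per_desktop: dict[str, float],
                 headroom: float = 0.8) -> tuple[int, str]:
    """Return (desktop count, limiting resource) for one host.

    Only `headroom` of each host resource is treated as usable, leaving
    spare capacity for peaks and the hypervisor itself.
    """
    limits = {name: int(host[name] * headroom // demand)
              for name, demand in per_desktop.items()}
    limiting = min(limits, key=limits.get)
    return limits[limiting], limiting


# Hypothetical host specification and per-desktop demand.
host = {"cpu_mhz": 41600, "ram_gb": 256, "iops": 12000}
per_desktop = {"cpu_mhz": 300, "ram_gb": 2.0, "iops": 15}
print(max_desktops(host, per_desktop))  # prints (102, 'ram_gb')
```

LoginVSI is then what tells you whether the paper figure holds up once real workloads are running.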

The VSIMax validates the design of the solution and gives both the solution architect and the end users/customers confidence that the VDI solution is fit for purpose. The graphic below shows the output from three test runs that validate the design for 100 desktops. You will need to install the VSI Analyser to compare the results, using the Comparison Wizard:-

Running The Tests

I’d recommend running at least three iterations of your test cycle to ensure a reliable result. The results should generally be quite close together, so you can average out the VSIMax over the three runs. That being said, on odd occasions you may see freak results (generally at the lower end of the performance spectrum); it’s worth discarding such a result and performing another test iteration. This can happen for a variety of reasons, such as the pool not having reached steady state. Several simultaneous power cycle operations on a pool, for example, can cause performance degradation.
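As a sketch of that process, assuming you’ve recorded the VSIMax from each run, the hypothetical helper below averages the runs and flags any result that falls more than a chosen fraction below the median as a candidate for re-running. The 15% tolerance is an arbitrary assumption, not a LoginVSI recommendation.

```python
from statistics import mean, median


def average_vsimax(results, tolerance=0.15):
    """Average VSIMax across runs, excluding low outliers.

    Returns (average of kept runs, list of runs worth repeating). A run is
    treated as a freak result if it is more than `tolerance` below the median.
    """
    if not results:
        raise ValueError("no results")
    floor = median(results) * (1 - tolerance)
    kept = [r for r in results if r >= floor]
    rerun = [r for r in results if r < floor]
    return mean(kept), rerun


# Hypothetical VSIMax values from four runs, one of them a freak result.
avg, rerun = average_vsimax([98, 101, 100, 72])
print(round(avg, 1), rerun)  # prints 99.7 [72]
```

Only low outliers are flagged, matching the observation that freak results tend to sit at the lower end of the performance spectrum.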

Analysing Bottlenecks

So let’s say you’ve built your solution to meet the needs of 100 simultaneous virtual desktop connections, but your VSIMax figure averages out well below that figure (worryingly so!). Where do you go from here? This is where the performance of the hypervisor host comes into play. In our particular test, the hypervisor in use is vSphere. This is good because vCenter automatically collects performance statistics and stores them in its database, so we don’t need to babysit real-time statistics to find the bottleneck; we can simply look back retrospectively in vCenter.

The main areas to look at first for performance bottlenecks include:-

- Processor

- Memory

- Storage

There are other metrics we could look at, but in a high proportion of cases the bottleneck is likely to be one of the three main resources listed above. Looking at processor first, we can obtain graphs from vCenter for the lifetime of the test run (so please make sure you note the start and stop times of the tests!). When exporting the information, select only the processor, memory and datastore check boxes to keep the data to a minimum to start with.
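Once exported, it’s worth trimming the data to the test window before drawing any conclusions. The sketch below assumes a CSV export with a timestamp column and a single value column. The column names `Timestamp` and `Value` and the ISO-style timestamp format are assumptions, so adjust them to match whatever your export actually contains.

```python
import csv
import io
from datetime import datetime


def samples_in_window(csv_text, start, stop, time_col="Timestamp", value_col="Value"):
    """Return (timestamp, value) pairs recorded between start and stop, inclusive.

    The column names and timestamp format are assumptions about the export.
    """
    samples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        ts = datetime.fromisoformat(row[time_col])
        if start <= ts <= stop:  # keep only samples taken during the test
            samples.append((ts, float(row[value_col])))
    return samples


# Hypothetical export covering a test that ran from 10:00 to 11:00.
export = """Timestamp,Value
2013-05-01 09:55:00,20
2013-05-01 10:00:00,45
2013-05-01 10:30:00,65
2013-05-01 11:05:00,30
"""
test_run = samples_in_window(export, datetime(2013, 5, 1, 10, 0), datetime(2013, 5, 1, 11, 0))
print(max(value for _, value in test_run))  # prints 65.0
```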

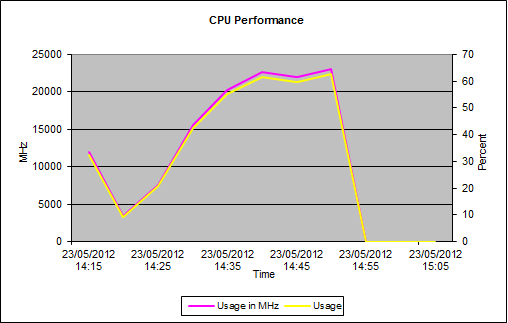

CPU Performance

Looking at the graph above from vCenter, we can see that processor utilisation varies throughout the test. The main takeaway is that CPU utilisation never exceeds ~65%, so we can see quite clearly from the off that CPU is not the limiting factor in this particular test scenario.

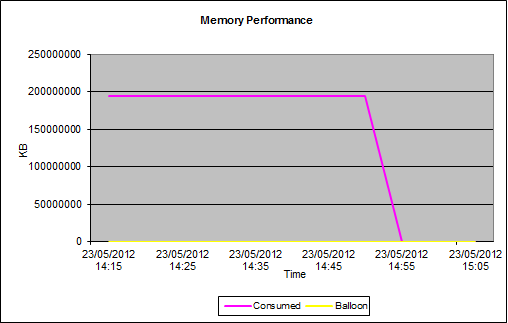

Memory Performance

To continue the investigation, we now need to look at memory to see whether this is the constraining resource. As the chart shows, memory is not the issue either: although memory usage hovers around the maximum, it stays a little below it.

20GB of physical RAM is available in the ESXi host, and as the performance chart shows, memory is heavily utilised for most of the test but does not max out. So, taking CPU and memory performance during the testing into account, we have enough spare capacity in these resources to service 100 virtual desktops. We’re making good progress in ruling out resources, but we haven’t found the bottleneck yet! Onwards to the datastore performance charts!

Datastore Performance

Looking at the performance charts for the datastore, we can see an issue straight away. The chart shows high latencies for both reads and writes; in the worst case, we can see a latency of 247ms for write operations to one of the datastores in use.

So the question here is: what is an acceptable disk latency? In broad terms, the following values are a reasonable rule of thumb:-

- Sub 10 ms – excellent, should be the target performance level

- 10-20 ms – indicates a problem, may cause noticeable application/infrastructure issues

- 20 ms or greater – indicates unacceptable performance, applications and services such as virtual desktops will exhibit significant performance issues
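Expressed as code, that rule of thumb is a simple lookup. This is only a restatement of the thresholds above; the labels are my own shorthand.

```python
def classify_latency(ms: float) -> str:
    """Map a disk latency in milliseconds onto the rule-of-thumb bands above."""
    if ms < 0:
        raise ValueError("latency cannot be negative")
    if ms < 10:
        return "excellent"      # sub 10 ms: the target performance level
    if ms < 20:
        return "problem"        # 10-20 ms: may cause noticeable issues
    return "unacceptable"       # 20 ms or greater


# The worst-case write latency seen in this test.
print(classify_latency(247))  # prints unacceptable
```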

Depending on your workload, you may well see latency spikes at the storage level. These spikes can be acceptable because, by definition, they are sporadic and rare and generally do not impact long-term performance. Microsoft lists acceptable disk latency spikes for SQL Server as 50ms, for example. I don’t know that I especially agree with this number, but they know SQL Server a lot better than I do!
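One way to separate occasional spikes from a sustained problem is to judge typical latency and spikes separately. The sketch below uses the median as the “sustained” figure and tolerates spikes only if they are rare and stay under a ceiling. Borrowing Microsoft’s 50ms SQL Server figure as that ceiling, and allowing 5% of samples to spike, are both illustrative assumptions rather than recommendations.

```python
from statistics import median


def latency_acceptable(samples_ms, sustained_limit=20.0,
                       spike_ceiling=50.0, max_spike_fraction=0.05):
    """Return True if typical latency is below `sustained_limit` and any
    samples at or above it are rare and never exceed `spike_ceiling`."""
    if not samples_ms:
        raise ValueError("no samples")
    spikes = [s for s in samples_ms if s >= sustained_limit]
    spike_fraction = len(spikes) / len(samples_ms)
    return (median(samples_ms) < sustained_limit
            and spike_fraction <= max_spike_fraction
            and max(samples_ms) <= spike_ceiling)


# Hypothetical samples: a steady 5 ms with a single 45 ms spike.
print(latency_acceptable([5.0] * 40 + [45.0]))  # prints True
```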

Performance Conclusions

Looking at the performance charts, we can see that the disk is the bottleneck. The latencies at the disk level are quite severe and would result in a much lower VSIMax value than originally planned for. If we can increase the performance of the disk layer, we can improve the density of virtual desktops per hypervisor host. In this case, we had local SAS disks in a RAID1 configuration. Even though third-party storage appliances were in use to try to improve throughput, the physical disks themselves could not sustain the level of performance required.
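The elimination process above can be summarised as comparing each resource’s peak during the test with a limit. In this sketch, the 20ms latency limit comes from the rule of thumb earlier and the 65% CPU and 247ms latency peaks are the figures observed in this test; the 85% CPU limit and the metric names are assumptions for illustration only.

```python
def find_bottlenecks(peaks: dict[str, float], limits: dict[str, float]) -> list[str]:
    """Return resources whose observed peak reaches its limit, worst first.

    "Worst" means the highest ratio of peak to limit. Resources without a
    recorded peak are ignored.
    """
    breaches = [(peaks[name] / limit, name)
                for name, limit in limits.items()
                if name in peaks and peaks[name] >= limit]
    return [name for _, name in sorted(breaches, reverse=True)]


peaks = {"cpu_pct": 65.0, "datastore_write_latency_ms": 247.0}   # observed in this test
limits = {"cpu_pct": 85.0, "datastore_write_latency_ms": 20.0}   # 85% CPU is hypothetical
print(find_bottlenecks(peaks, limits))  # prints ['datastore_write_latency_ms']
```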

To remove the bottleneck, the desktop pool was moved to SAN-based storage, in this case Fibre Channel storage on a NetApp array. One LUN was configured to host the desktop pool datastore, in a one-to-one relationship, as per best practice. As the storage now in use is enterprise grade, we would expect disk latencies to be significantly reduced. As mentioned before, LoginVSI can be a really useful tool for modelling configuration changes and their impact, and this is a good example. We’ve already shown that CPU and memory are not fully utilised, and that disk latencies are causing a lower than expected VSIMax value.

The performance graph for a virtual desktop datastore on the NetApp storage shows a much reduced latency of under 1 ms on average. As stated previously, any latency under 10ms is excellent; anything sub 1 ms is jet propelled! Now that we have identified and removed the performance bottleneck, our VDI solution will scale to the required 100 desktops, as per the original design. Obviously CPU, memory and datastore are only a subset of the performance metrics we could have obtained, but any bottleneck is most likely to be around those resources.

We could also look at metrics such as network, but we’d be most likely to do so if, for example, mouse movement was delayed or keystrokes were slow. In a LoginVSI test scenario, as the virtual desktops are designed to be “stand alone”, there should be minimal network traffic anyway.

Hopefully the two posts on LoginVSI have provided some guidance on how you can benchmark your environment, and also identify and rectify any bottlenecks that prevent you from scaling to the designed limits. I’d quite like to present this topic as a slide deck at a VMUG somewhere, sometime. Please let me know if that’s something you’d like to see!